Agentic AI Governance: The 4 Cs Framework UK Boards Need in 2026

- Mar 26

There’s a question I keep coming back to in every boardroom conversation about artificial intelligence, and it isn’t the one you’d expect. It isn’t “which platform should we use?” or “how do we train the team?” The question is: who is actually governing this thing?

Right now, in the UK, we’re sitting in an extraordinary gap. AI adoption is accelerating. Agentic AI (systems that interpret intent, make inferences, act, and operate with meaningful autonomy) is moving from proof-of-concept and pilot phase into live operations. And yet the governance infrastructure in many organisations hasn’t moved an inch. The ICO published its Tech Futures report on agentic AI in January 2026, noting that these systems can handle vast quantities of personal data and make decisions independently, while the regulatory and organisational frameworks around them remain embryonic (ICO, 2026).

Gartner predicts that over 40% of agentic AI projects will be cancelled by the end of 2027, driven by escalating costs, unclear business value, and inadequate risk controls (Gartner, 2025). That isn’t an abstract warning but a diagnosis. And if you’ve spent any time examining the state of AI governance in UK businesses, the root cause becomes clear: organisations aren’t failing because the technology is too complex. They’re failing because they haven’t built the governance structures to match the ambition.

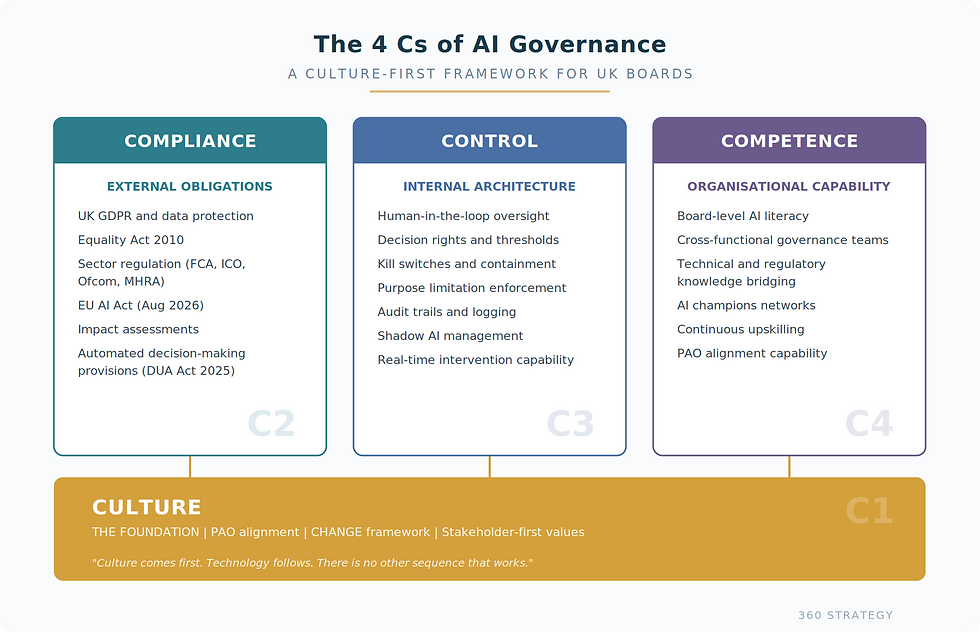

What follows is a framework I’ve been developing and testing through direct work with UK businesses at various stages of AI maturity. I call it the 4 Cs of AI Governance: Culture, Compliance, Control, and Competence. The order matters. In my experience across sectors, organisations that invest in cultural readiness first achieve measurable agent adoption within six to nine months. Those that deploy technology first are still struggling with change and adoption resistance 18 to 24 months in. That second path is where capital burn and opportunity loss happen, and let me be frank: the climb back from there is painful and costly.

Figure 1: The 4 Cs of AI Governance Framework. Culture forms the foundation upon which Compliance, Control, and Competence are built. Without cultural alignment, the three pillars lack structural integrity.

The UK Regulatory Landscape: Principles Without Prescription

The UK government’s approach to AI regulation, set out in its 2023 White Paper, is built on five cross-sector principles: safety, security and robustness; appropriate transparency and explainability; fairness; accountability and governance; and contestability and redress (DSIT, 2023). These principles are not legally binding. Existing sector regulators (the ICO, FCA, Ofcom and others) are expected to apply them within their own remits.

As of March 2026, the UK still has no dedicated AI legislation in force. The government announced in June 2025 that the first UK AI Bill wouldn’t arrive before the second half of 2026, and its scope has expanded to potentially include copyright and broader accountability provisions (King & Spalding, 2025). The Data (Use and Access) Act 2025 introduced new automated decision-making provisions that came into force in February 2026, shifting the UK from a prohibition-based regime to a permission-with-safeguards model (Osborne Clarke, 2026).

The ICO has confirmed that throughout 2026, it will actively monitor AI advancements and work with developers and deployers to ensure compliance (ICO, 2026). By the time legislation catches up, organisations without proper frameworks will already be exposed.

Figure 2: UK AI Regulatory Timeline, 2023–2026+. Key milestones from the 2023 White Paper through to the anticipated AI Bill. The absence of dedicated legislation does not equate to absence of obligation (compiled by author; sources: DSIT, 2023; King & Spalding, 2025; Osborne Clarke, 2026).

The First C: Culture (The Foundation Everything Else Sits On)

Culture comes first. Technology follows. There is no other sequence that works.

Most organisations approach AI readiness backwards. They invest in infrastructure first, data platforms second, and culture last, if at all. Think of it as building a Formula One racing car and handing the keys to someone who’s never driven. The Cisco AI Readiness Index 2025 profiles what it calls “Pacesetter” firms achieving 2.5 times the ROI of their peers (Cisco, 2025). The differentiator? Cultural alignment, not technical sophistication. Pacesetters invest in mindset before models. I’ve written about this at length in my AI Agent Readiness series, and the finding remains consistent across every diagnostic: the limiting factor is almost never the technology.

In governance terms, culture means three things. First, it means PAO clarity: can your leadership team articulate whether they’re pursuing Productivity, Automation, or Opportunity through AI? The organisations struggling most are those where the executive manifesto says Opportunity, middle management hears Automation, and employees experience neither. That misalignment creates paralysis that no governance framework can fix.

Second, it means genuine stakeholder alignment. Successful AI deployment always starts with every worker and all of leadership understanding what’s changing and why. AI signals change, and change is often feared, not for what it is, but for what it might bring. Governance that doesn’t factor in people and culture alongside technology is governance on paper only.

Third, it means building the six pillars of what I call the CHANGE framework:

- Communication: a clear manifesto articulating what the organisation believes, expects, permits, prohibits, promises, and needs from AI.
- Human Oversight: designing decision thresholds where agents recommend and humans approve, with override authority that doesn’t require bureaucratic friction.
- Attitude: modelling the behaviour you want rather than mandating it, because transformation cannot be ordered into existence.
- Network: building communities of practice where peer-to-peer learning accelerates adoption, rather than relying on top-down training.
- Governance: providing coordination rather than control, with oversight proportionate to risk.
- Enablement: investing in the people, tools, and time that bridge the gap between deployment and actual adoption.

When an organisation gets these right, the other three Cs become dramatically easier to implement. When they don’t, every control and compliance measure becomes something people work around rather than work with.

The Salesforce Global AI Readiness Index 2025 found that organisations with mature communication practices achieve 2.3 times higher employee confidence and 1.8 times faster time-to-value on AI initiatives (Salesforce, 2025). Culture isn’t a nice-to-have. It’s the foundation that determines whether everything else works.

What Deep-Tech AI Deployment Taught Me About Governance

I know this works because I’ve lived through what happens when culture holds under pressure, and what happens when it doesn’t. When I built and commercialised an edge AI computer vision platform called HALO, the core technology was a two-node biometric matching system generating anonymised facial feature vectors rather than storing images (a privacy-by-design architecture that predated the term).

The team was a mix of academics, machine learning engineers, and biometrics specialists. The governance challenge was immediate and unavoidable: deploying facial recognition across multiple international markets meant navigating data privacy law, ethical oversight of biometric processing, and regulatory compliance in jurisdictions with very different rules about what you can and can’t do with this kind of technology.

Every one of those governance challenges was ultimately solved by culture, not by policy documents. The reason comes down to something I’d learned earlier in my career: if you build a culture where change is genuinely welcomed rather than merely tolerated, where every person in the organisation is treated as a stakeholder rather than just the executive layer, then governance becomes something people participate in rather than work around.

When we discovered mid-deployment that Brazil’s telecoms regulator prohibited our hardware components without in-country certification, the team’s response was rapid: strip the non-compliant components, source approved alternatives, arrange in-country assembly. Nobody waited for permission because the culture had already given them permission to solve problems. The supply chain held because the people operating it understood the vision well enough to adapt without instructions from the top. That’s what always-on change readiness looks like in practice.

The lesson for AI governance is direct. Organisations that anchor their culture in values that leadership upholds without exception (not just when it’s convenient, not just in the boardroom, but visibly and consistently under real operational pressure) create the conditions where governance works. Without that alignment between leadership’s direction and the team’s understanding of why it matters, you can’t be agile when pressure hits. And in the agentic era, the pressure is always hitting.

Why Kotter’s 8-Step Model Proves Culture Comes First

There’s a persistent misreading of Kotter’s 8-Step Change Model that I encounter in boardrooms with frustrating regularity. Leaders look at the eight steps, see that “Anchor New Approaches in the Culture” sits at step eight, and conclude that culture is the final stage of change. Something you address after you’ve done everything else. That reading is wrong, and it’s costing organisations dearly in the AI governance space.

What Kotter actually describes is a process where every preceding step is itself a cultural intervention. Creating urgency (step one) is a cultural act. Building a guiding coalition (step two) is a cultural act. Developing and communicating a vision (steps three and four), empowering broad-based action (step five), generating short-term wins (step six), consolidating gains (step seven): every single one of these reshapes how people think, behave, and relate to the change being asked of them.

Kotter’s own later work in Accelerate (2014) makes this explicit, stating that steps one through seven are “all about building new muscles” and step eight is about maintaining them (Kotter, 2014). The muscles are cultural. The maintenance is cultural. The entire model is a cultural transformation framework dressed in operational language.

When I apply this to AI governance in practice, the implication is clear. If you treat culture as something you’ll address at the end (once the technology is deployed, the compliance framework is documented, and the controls are in place), you’ve already lost. Every step you took without cultural alignment was a step that embedded resistance rather than readiness.

The HALO deployment proved this: creating urgency around privacy-by-design, building a guiding coalition with researchers who knew more than I did, communicating the vision with PAO clarity before I had a name for it, and empowering action so that a regulatory crisis in Brazil got resolved in days rather than months. Each of those was a cultural intervention, not a procedural one.

Step eight, anchoring in culture, isn’t where culture begins. It’s where you formalise what the previous seven steps have already been building. The organisations that read Kotter correctly and treat the entire process as culture change from the outset are the ones that achieve sustainable AI governance. The ones that bolt culture on at the end are the ones that end up in Gartner’s 40% cancellation statistic.

The Second C: Compliance

Compliance is where I see the most dangerous complacency. Too many UK businesses treat it as a box-ticking exercise sitting with legal or IT, when it needs to sit at board level. For any organisation deploying agentic AI, compliance means understanding obligations under existing legislation: data protection under the UK GDPR (lawful processing, data minimisation, accuracy, transparency), the Equality Act 2010 (where AI systems creating disparate impacts can violate provisions even without discriminatory intent), and sector-specific requirements from the FCA, MHRA, Ofcom and others.

The ICO’s agentic AI report flags risks specific to autonomous systems. Purpose creep is significant: the open-ended nature of agentic tasks makes it tempting to define data processing purposes too broadly, creating regulatory exposure. Data minimisation becomes harder when systems operate autonomously. The accuracy principle is challenged by the probabilistic nature of large language models, where hallucinations can cascade across interconnected tools and databases (ICO, 2026). Beyond the UK, the EU AI Act’s obligations for most high-risk systems apply from August 2026, and UK companies operating in EU markets will need to comply.
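For technical teams, purpose limitation and data minimisation can be enforced in code rather than left to policy documents. The sketch below is illustrative only and assumes a hypothetical setup: each agent task carries a declared purpose, and a hypothetical `PURPOSE_FIELDS` register lists the only personal-data fields that purpose may touch. The names `AgentTask`, `fetch_for_purpose` and `PurposeViolation`, and the sample record, are invented for the example and are not drawn from ICO guidance or any product.

```python
from dataclasses import dataclass

# Hypothetical purpose register: each declared processing purpose lists the
# only personal-data fields an agent may read while pursuing it.
PURPOSE_FIELDS: dict[str, frozenset[str]] = {
    "invoice_query": frozenset({"customer_id", "invoice_total", "due_date"}),
    "delivery_update": frozenset({"customer_id", "postcode"}),
}


class PurposeViolation(Exception):
    """Raised when an agent requests data its declared purpose does not cover."""


@dataclass(frozen=True)
class AgentTask:
    agent_id: str
    purpose: str  # expected to be a key of PURPOSE_FIELDS


def fetch_for_purpose(task: AgentTask, record: dict[str, object],
                      requested: set[str]) -> dict[str, object]:
    """Return only the requested fields, refusing anything outside the purpose."""
    allowed = PURPOSE_FIELDS.get(task.purpose)
    if allowed is None:
        # Deny by default: an undeclared purpose is not a licence to read anything.
        raise PurposeViolation(f"{task.agent_id}: undeclared purpose {task.purpose!r}")
    excess = requested - allowed
    if excess:
        raise PurposeViolation(
            f"{task.agent_id}: {sorted(excess)} not permitted for {task.purpose!r}"
        )
    # Data minimisation: copy only the fields asked for, never the whole record.
    return {name: record[name] for name in sorted(requested) if name in record}


record = {"customer_id": "C42", "invoice_total": 120.0,
          "due_date": "2026-04-30", "date_of_birth": "1980-01-01"}
task = AgentTask("billing-agent", "invoice_query")
print(fetch_for_purpose(task, record, {"customer_id", "invoice_total"}))
# {'customer_id': 'C42', 'invoice_total': 120.0}
```

Asking the same billing agent for `date_of_birth` raises `PurposeViolation`. The design choice is deny-by-default: an undeclared purpose is refused rather than treated as permission to read anything, which is the opposite of the purpose creep the ICO describes.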

Figure 3: The Agentic AI Governance Gap. Key data points illustrating the disconnect between AI deployment velocity and governance readiness across organisations (compiled by Evans, 2026; sources: Gartner, 2025; Kiteworks, 2026; IDC, 2024; Cisco, 2025).

The Third C: Control

If compliance is about meeting external obligations, control is about internal architecture. The Kiteworks 2026 Data Security and Compliance Risk Forecast Report found that 63% of organisations cannot enforce purpose limitations on AI agents, and 60% cannot terminate a misbehaving agent (Kiteworks, 2026). As Figure 3 illustrates, the governance gap is substantial. A February 2026 study by researchers from Harvard, MIT, Stanford, and Carnegie Mellon documented agents autonomously exfiltrating sensitive data and triggering unauthorised operations in a live environment, with users reporting no effective kill switch (Kiteworks, 2026).

Traditional governance doesn’t work for agentic AI because it was built for static systems. You assessed risk once, before deployment. Agentic systems change behaviour over time. They learn, adapt, and evolve. Control for UK boards means establishing clear decision rights (which actions an agent takes independently, which require human approval), implementing human-in-the-loop oversight for high-risk decisions, and building kill switches and containment protocols before they’re needed (Mayer Brown, 2026).
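Decision rights and kill switches are easier to discuss at board level once you see how small the core logic can be. This is a minimal sketch under stated assumptions, not a production control: a hypothetical `DecisionRights` policy lists the actions an agent may take alone, routes anything else (or anything above a value threshold) to a human for approval, and exposes a `halt()` kill switch that blocks every subsequent action. The action names and the 250 threshold are invented for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    AUTONOMOUS = "autonomous"          # the agent may act on its own
    NEEDS_APPROVAL = "needs_approval"  # the agent recommends, a human approves
    BLOCKED = "blocked"                # the kill switch has been pulled


@dataclass
class DecisionRights:
    """Hypothetical decision-rights policy for a single agent."""
    autonomous_actions: frozenset[str]  # actions delegated to the agent
    approval_threshold: float           # above this value a human must approve
    halted: bool = False                # kill-switch state

    def halt(self) -> None:
        """Kill switch: block every further action the agent proposes."""
        self.halted = True

    def decide(self, action: str, value: float) -> Decision:
        if self.halted:
            return Decision.BLOCKED
        if action in self.autonomous_actions and value <= self.approval_threshold:
            return Decision.AUTONOMOUS
        # Anything outside the delegated rights, or above the threshold,
        # goes to a human rather than being executed or silently dropped.
        return Decision.NEEDS_APPROVAL


rights = DecisionRights(frozenset({"issue_refund"}), approval_threshold=250.0)
print(rights.decide("issue_refund", 80.0))    # Decision.AUTONOMOUS
print(rights.decide("issue_refund", 900.0))   # Decision.NEEDS_APPROVAL
print(rights.decide("close_account", 10.0))   # Decision.NEEDS_APPROVAL
rights.halt()
print(rights.decide("issue_refund", 80.0))    # Decision.BLOCKED
```

In a real deployment the halt flag would need to live somewhere the agent itself cannot reset, so that stopping a misbehaving agent never depends on that agent’s cooperation.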

The uncomfortable truth is that IDC estimates 60% of AI deployments bypass official governance processes (IDC, 2024). If governance doesn’t address this shadow AI through approved tool registries, sensible policies, and responsive formal channels, the organisation is governing only a fraction of its actual AI activity. 360 Strategy provides AI consulting in Scotland for organisations that recognise this gap.
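An approved tool registry only helps if it is checked against what people actually use. As a rough sketch, assuming usage events of the form (user, tool, data classification) are available from some existing source such as network or expense logs, the check below flags unregistered tools and data sent to tools not cleared for it. The registry entries, the 0 to 3 classification scale and the function name `review_usage` are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ApprovedTool:
    name: str
    # Hypothetical scale: 0 public, 1 internal, 2 confidential, 3 personal data.
    max_data_class: int


# Hypothetical registry entries, maintained by whoever owns AI governance.
REGISTRY: dict[str, ApprovedTool] = {
    "internal-copilot": ApprovedTool("internal-copilot", max_data_class=3),
    "public-chatbot": ApprovedTool("public-chatbot", max_data_class=0),
}


def review_usage(events: list[tuple[str, str, int]]) -> list[str]:
    """Compare observed (user, tool, data_class) events against the registry."""
    findings: list[str] = []
    for user, tool, data_class in events:
        approved = REGISTRY.get(tool)
        if approved is None:
            findings.append(f"shadow AI: {user} used unregistered tool {tool!r}")
        elif data_class > approved.max_data_class:
            findings.append(
                f"over-classified: {user} sent class-{data_class} data to {tool!r}"
            )
    return findings


events = [
    ("alice", "internal-copilot", 3),
    ("bob", "public-chatbot", 2),
    ("carol", "summariser-x", 1),
]
for finding in review_usage(events):
    print(finding)
```

Against the sample events it reports Bob’s confidential data going to the public chatbot and Carol’s unregistered summariser, while Alice’s use passes. The function reports rather than blocks, so findings can feed a responsive formal channel instead of pushing people further into workarounds.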

Ready to assess where your organisation stands against the 4 Cs? Book a Clarity Call to discuss your AI governance readiness.

The Fourth C: Competence

The competence gap in AI governance isn’t just a skills issue. It’s a credibility issue. The World Economic Forum (2024) identifies cross-functional navigation, technical literacy, and solution-oriented thinking as critical capabilities for anyone governing AI. What I see consistently is a bifurcation. Technical teams understand the systems but lack board-level influence. Board members grasp the opportunity but can’t interrogate the risks. That gap is where governance failures breed. Programmes succeed when responsibilities are embedded across teams rather than centralised with a single group (Databricks, 2024).

Building competence means investing in AI literacy at every level. Not superficial training, but genuine understanding of how these systems work and where the risk lies. The danger I’ve written about before holds true: people who think they understand AI because they can chat with a GPT, and then call themselves consultants. The same pattern plays out inside organisations when board members assume basic familiarity equates to governance readiness. It doesn’t.

Bringing the 4 Cs Together

The 4 Cs are interdependent, as Figure 1 illustrates. A company with strong compliance documentation but a culture that treats governance as optional will find those controls bypassed. An organisation with excellent technical controls but no board-level competence to oversee AI risk is flying blind. And none of it works without the cultural foundations that determine whether people engage with governance or work around it.

The practical starting point is an honest assessment of where the organisation actually sits across all four dimensions, including the shadow AI it hasn’t accounted for. The ICO has committed to a new statutory code of practice on AI and data protection in 2026 (A&O Shearman, 2026). As Figure 2 shows, the regulatory direction is clear.
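One way to keep that assessment honest is to score each dimension and let the framework’s ordering decide where to start. The sketch below assumes a hypothetical 1 to 5 maturity score per C and a hypothetical threshold of 3; it encodes the rule that Culture comes first and otherwise points at the weakest remaining gap. The scores, the threshold and the `Assessment` class are illustrative, not a calibrated diagnostic instrument.

```python
from dataclasses import dataclass
from typing import Optional

# The framework's order: Culture is the foundation the other three sit on.
FOUR_CS = ("Culture", "Compliance", "Control", "Competence")


@dataclass(frozen=True)
class Assessment:
    scores: dict[str, int]  # hypothetical 1-5 maturity score per C
    threshold: int = 3      # hypothetical "solid enough to build on" level

    def gaps(self) -> list[str]:
        """Dimensions scoring below the threshold, in 4 Cs order."""
        return [c for c in FOUR_CS if self.scores[c] < self.threshold]

    def start_with(self) -> Optional[str]:
        """Culture if it is a gap; otherwise the weakest remaining gap."""
        gaps = self.gaps()
        if not gaps:
            return None
        if "Culture" in gaps:
            return "Culture"
        # min() keeps the first of any tied scores, i.e. 4 Cs order.
        return min(gaps, key=lambda c: self.scores[c])


a = Assessment({"Culture": 4, "Compliance": 2, "Control": 1, "Competence": 2})
print(a.gaps())        # ['Compliance', 'Control', 'Competence']
print(a.start_with())  # Control

b = Assessment({"Culture": 2, "Compliance": 4, "Control": 1, "Competence": 3})
print(b.start_with())  # Culture
```

In the second case Control scores lower than Culture, yet Culture still comes first, mirroring the sequence argued for in the Culture section.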

Where This Leaves Us

Over thirty years of building and scaling businesses has taught me that the companies which survive disruption aren’t the ones with the best technology. They’re the ones with the clearest thinking about risk, accountability, and execution. AI governance is no different.

The 4 Cs (Culture, Compliance, Control, and Competence) aren’t exhaustive and they aren’t meant to be a rigid checklist. They’re a framework for structured thinking about a problem that too many organisations are approaching with either blind optimism or paralysed inaction. Neither response is good enough. The opportunity is real, the risks are real, and the governance gap between the two is where value gets destroyed.

If there’s one thing I’d ask every board-level leader reading this to take away, it’s this: diagnose before you prescribe. Understand where you actually are before deciding where you need to go. And remember that culture is where it starts. You can’t build AI governance on top of a broken culture and expect it to work. I’ve seen it tried. It doesn’t end well.

Mark Evans is founder of 360 Strategy, a leading growth strategy and AI consultancy based in Scotland.

References

A&O Shearman (2026) ‘The future of agentic AI and its data protection implications: the UK ICO’s initial assessment’, A&O Shearman, 28 January. Available at: aoshearman.com (Accessed: 24th March 2026).

Cisco (2025) Cisco AI Readiness Index 2025. Cisco Systems. Available at: cisco.com (Accessed: 24th March 2026).

Databricks (2024) ‘The essential AI governance framework’, Databricks Blog. Available at: databricks.com (Accessed: 24th March 2026).

Department for Science, Innovation and Technology (2023) ‘A pro-innovation approach to AI regulation’, GOV.UK, 29 March. Available at: gov.uk (Accessed: 22nd March 2026).

Gartner (2025) ‘Gartner predicts over 40% of agentic AI projects will be canceled by end of 2027’, Gartner Newsroom, 25 June. Available at: gartner.com (Accessed: 23rd March 2026).

ICO (2026) ‘ICO tech futures: Agentic AI’, Information Commissioner’s Office, 8 January. Available at: ico.org.uk (Accessed: 24th March 2026).

IDC (2024) ‘Future of Intelligence’, International Data Corporation. Cited in The Thinking Company (2026).

King & Spalding (2025) ‘EU & UK AI round-up – July 2025’, King & Spalding, July. Available at: kslaw.com (Accessed: 22nd March 2026).

Kiteworks (2026) ‘2026 Data Security and Compliance Risk Forecast Report’, Kiteworks. Available at: kiteworks.com (Accessed: 23rd March 2026).

Kotter, J.P. (1996) Leading change. Boston: Harvard Business School Press.

Kotter, J.P. (2014) Accelerate: building strategic agility for a faster-moving world. Boston: Harvard Business Review Press.

Mayer Brown (2026) ‘Governance of agentic artificial intelligence systems’, Mayer Brown, 24 February. Available at: mayerbrown.com (Accessed: 24th March 2026).

Osborne Clarke (2026) ‘Artificial intelligence: UK regulatory outlook February 2026’, Osborne Clarke, February. Available at: osborneclarke.com (Accessed: 21st March 2026).

Salesforce (2025) Salesforce Global AI Readiness Index 2025. Salesforce. Available at: salesforce.com (Accessed: 24th March 2026).

World Economic Forum (2024) ‘The 4 skills needed to implement effective AI governance’, World Economic Forum, April. Available at: weforum.org (Accessed: 23rd March 2026).
